In the last post we created a cost model of the SoC design process, modeling development stages separately and composing them for the overall design flow. Each stage models a few basic components: baseline development work, iterations (e.g., debug loop), hand-offs to and from other stages, and bug reporting.

This post will discuss use of a single-stage model, a multi-stage model, and implementation details, providing examples from RTL and verification stages.

Applying the cost model

Although the model can be extended to the entire design flow, it does not have to be complete to be useful: specific aspects of a single stage’s debug loop efficiency or hand-off costs between two stages can be analyzed in isolation.

While the data needed for the model generally exists, it is not always tracked, or it may be tracked but not split into the necessary categories. Estimates or ratios based on available data are enough to get started.

For example, we might estimate that 5% of our bugs came from upstream deliveries. Based on estimates of what it costs to detect and isolate the bug we can compute what kind of investment would be worthwhile to reduce or eliminate these upstream bugs.
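
As a rough illustration of this calculation, the sketch below multiplies a bug count by the estimated upstream fraction and a per-bug detect-and-isolate cost. The function name and every number other than the 5% estimate are hypothetical placeholders, not project data.

```python
def upstream_bug_cost(total_bugs, upstream_fraction, cost_per_bug):
    """Estimated cost of detecting and isolating bugs received from upstream."""
    return total_bugs * upstream_fraction * cost_per_bug


# Hypothetical inputs, for illustration only.
total_bugs = 400           # bugs handled by this stage (assumed)
upstream_fraction = 0.05   # the 5% estimate used in the text
cost_per_bug = 2_000.0     # dollars to detect and isolate one bug (assumed)

break_even = upstream_bug_cost(total_bugs, upstream_fraction, cost_per_bug)
print(f"Upstream bugs cost roughly ${break_even:,.0f} to detect and isolate")
```

Any investment in upstream quality that costs less than this amount, and removes most of these bugs, would be worth considering under these assumptions.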

– Two main uses of the cost model

We don’t know an SoC’s final cost until it is in the marketplace – up until the last minute more problems could be found and additional rework and deliveries may be required.

There are two things we can do:

- retrospective analysis and planning: starting with a completed similar project, evaluate methodology and tool changes.

- track ongoing efficiency metrics: monitoring cost components (effectively Key Performance Indicators) as a project is in execution.

— Retrospective analysis and planning

When planning a new project, we can use data from a similar completed project to evaluate investments to improve efficiency.

Ideally, we would scale the reference project by the new project’s differences in complexity, team experience, any additional quality requirements, etc., but measuring complexity and quality is, at best, a research topic.

What we can do is have a solid basis for doing what-if analysis of the past project, and rely on engineering judgement to do whatever scaling is necessary to make it applicable to the project being planned.

There are also different types of efficiency improvements that can be achieved, for example:

- better quality for the same cost

- the same quality in less calendar time but not necessarily with less cost

- the same quality with less cost but not necessarily less calendar time

- the same quality, trading manual effort for compute or a new tool which might scale better.

The type of efficiency improvement desired may vary within the same project.

This model allows for automation trade-offs, and cost-benefit analysis of tool or capital purchases by converting engineering time, compute, and licensing to dollars. Given the cost and available resources, a “less calendar time” determination can be worked out.

Most efficiency improvement opportunities are within a single stage, with others in the overall flow. Let’s review each below.

— Single-stage improvement opportunities

Single-stage improvements are interesting because they are within the scope of the team owning the stage to identify and implement.

Within a stage, we identified specific cost components: accept and prepare hand-offs, baseline work, iterations (e.g., debug loop), and bug reporting. In each case, to improve efficiency you can:

- reduce the cost of an action

- reduce the number of times you perform the action.

For example, you can reduce the number of bugs to process through the debug loop, you can reduce the time it takes to go through the debug loop, or both.
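
The two levers can be sketched as a simple product of count and unit cost. This is a minimal illustration; the function name and all numbers are assumed, not measured.

```python
def component_cost(count, unit_cost):
    """Cost of a component: number of times performed x cost each time."""
    return count * unit_cost


# Hypothetical debug-loop numbers (assumed for illustration).
bugs = 200
cost_per_pass = 1_500.0  # dollars per trip through the debug loop

scenarios = {
    "baseline": component_cost(bugs, cost_per_pass),
    "20% fewer bugs": component_cost(160, cost_per_pass),
    "20% faster loop": component_cost(bugs, 1_200.0),
    "both": component_cost(160, 1_200.0),
}
for name, cost in scenarios.items():
    print(f"{name:>16}: ${cost:,.0f}")
```

In this simple form the two levers are interchangeable, so the choice between them comes down to which is cheaper to achieve.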

Let’s look at each of the single-stage cost components:

—- Reducing hand-off cost

Automating release/acceptance procedures shrinks the per hand-off cost. Improving IP quality will reduce the need for additional unscheduled releases.

Continuous integration is an interesting case – the idea is to reduce integration time by performing an integration on every code commit.

To restate it in terms of the cost model, the idea is to make the integration loop cost less, by combining it with the normal debug loop and going through it many more times. Whether CI is a win overall can be determined by looking at the data, but care needs to be taken not to negatively impact the individual engineer’s productivity by making the debug loop too much longer.
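
One way to look at the data is sketched below, assuming CI adds a fixed overhead to every commit's debug loop and makes each remaining integration cheaper. The function name and all dollar figures are hypothetical.

```python
def flow_cost(commits, debug_loop_cost, integrations, integration_cost):
    """Total cost of per-commit debug loops plus separate integration loops."""
    return commits * debug_loop_cost + integrations * integration_cost


# Hypothetical numbers in dollars (assumed for illustration).
commits = 1_000

# Without CI: a short debug loop plus a few expensive integrations.
without_ci = flow_cost(commits, debug_loop_cost=100.0,
                       integrations=10, integration_cost=8_000.0)

# With CI: every commit pays a small integration overhead, and the
# remaining scheduled integrations become much cheaper.
ci_overhead_per_commit = 30.0
with_ci = flow_cost(commits, debug_loop_cost=100.0 + ci_overhead_per_commit,
                    integrations=10, integration_cost=1_000.0)

# Per-commit overhead at which CI stops paying off for these inputs.
break_even_overhead = (without_ci - flow_cost(commits, 100.0, 10, 1_000.0)) / commits

print(f"without CI: ${without_ci:,.0f}, with CI: ${with_ci:,.0f}")
print(f"CI pays off while overhead stays under ${break_even_overhead:,.2f} per commit")
```

The break-even overhead makes the caution about lengthening the debug loop concrete: past that point the extra per-commit cost outweighs the integration savings.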

—- Reducing baseline cost

Working at a higher level of abstraction, for example with behavioral models or testbenches, offers possible gains, which explains the popularity of SystemC and the SystemVerilog TestBench (SVTB) language features.

The single biggest way of reducing the baseline cost is through reuse: existing designs, testbenches, infrastructure, tests, verification components, etc. Universal Verification Methodology (UVM) and other libraries are intended to enable increased reuse.

—- Faster cycle time

A number of techniques can be used to compress each component of the debug loop to make a faster overall loop. Here are a few examples:

- linting and static analysis can detect many bugs more quickly than simulation

- assertions are very effective at localizing an error, speeding isolation

- more compute resources could speed the fix/regress component.

Note that there could be fix/regress or other iterations within the overall debug loop that may be worth tracking to improve debug efficiency, but we won’t go to that level of detail in this discussion.

—- Reducing the number of cycles

In the context of the debug loop, fewer cycles means fewer bugs were detected in the current stage, which were either created in this stage or received from an upstream stage.

The comments on baseline efficiency apply here: reuse and higher-level languages create fewer bugs. Techniques like linting and code reviews cause errors to be found and fixed without the debug loop being invoked or the bug logged.

Errors passed from upstream stages can be reduced by better upstream quality and more thorough acceptance criteria. Adding cost to acceptance testing vs. the cost of debugging upstream bugs is a good example of the type of tradeoffs that can be made within a stage.
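
A sketch of this trade-off, assuming the added acceptance checks catch a known average number of upstream bugs per release. The function, its parameters, and the figures are illustrative assumptions.

```python
def acceptance_tradeoff(releases, extra_acceptance_cost,
                        bugs_caught_per_release, debug_cost_per_upstream_bug):
    """Return (added acceptance cost, avoided debug cost, net saving)."""
    added = releases * extra_acceptance_cost
    avoided = releases * bugs_caught_per_release * debug_cost_per_upstream_bug
    return added, avoided, avoided - added


# Hypothetical inputs in dollars (assumed for illustration).
added, avoided, net = acceptance_tradeoff(
    releases=12,
    extra_acceptance_cost=500.0,
    bugs_caught_per_release=1.5,
    debug_cost_per_upstream_bug=2_000.0,
)
print(f"added: ${added:,.0f}, avoided: ${avoided:,.0f}, net saving: ${net:,.0f}")
```

A negative net saving would indicate that the extra acceptance testing costs more than the debugging it prevents.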

Another way of reducing debug of upstream bugs is better communication on the state of the release – an integration team will occasionally spend considerable time debugging a feature before discovering it has not been implemented yet.

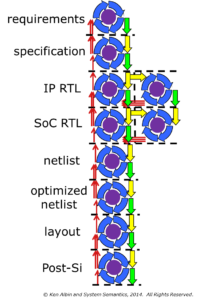

— Multi-stage improvements

While most efficiency improvements can be made within a stage by the team owning the stage, some significant improvements can only be made with the benefit of the big picture and may require encouragement from management for cross-team cooperation:

- shift resources to an earlier stage to improve quality

- when planning, focus more on goals than specific tool flows (e.g., X analysis vs. gate-level simulation)

- deliberately plan which environments should be tightly coupled (e.g., to enable faster emulation builds or more VIP reuse) and which should be loosely coupled (e.g., to accommodate separation by organization or site)

- identify and address “stage containment” problems with bugs being discovered downstream, especially more than one stage downstream

- balance the benefit of pushing checks to upstream stages with the impact on the upstream stage.

—- Two notes:

—– Cost-to-fix models

From the data in the cost model we can build project/organization cost-to-fix models as discussed in the cost of bugs post.

Rather than relying on generic curves, we can see from the cost model that the per-stage cost increase is a function of:

- the increased difficulty in detecting and isolating bugs in a downstream context

- rework (additional handoffs of design, testbenches, documentation, etc.)

For each additional stage downstream, the difficulty of debug and amount of rework increase in measurable ways.
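
A project-specific cost-to-fix table could be assembled from such measurements along the following lines. The lists are indexed by the number of stages between where a bug was introduced and where it was found, and all values are hypothetical.

```python
# Hypothetical per-bug measurements in dollars, indexed by how many stages
# downstream the bug was found (index 0 = found in the stage that created it).
detect_isolate_cost = [500.0, 1_500.0, 4_000.0]
rework_cost = [0.0, 1_000.0, 2_500.0]   # extra hand-offs, re-acceptance, etc.
fix_cost = 300.0                        # paid in the creating stage in every case

cost_to_fix = [fix_cost + detect + rework
               for detect, rework in zip(detect_isolate_cost, rework_cost)]

for distance, cost in enumerate(cost_to_fix):
    print(f"found {distance} stage(s) downstream: ${cost:,.0f}")
```

Using measured per-stage values avoids assuming any particular shape for the curve.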

—– Rewarding poor quality?

Ultimately, bugs created in a stage must be debugged and fixed in that stage, regardless of the stage they were discovered in.

When a downstream stage performs the detection, isolation, and reporting of bugs, the stage where the error was created only has to perform the fix and possible additional release.

If the cost to the bug-creating stage of preparing a release is less than the additional investment that would have been required to detect and isolate the bug itself (e.g., more compute resources, a more sophisticated testbench, etc.), then it is a net win for that stage to let the downstream stage do the debugging. It is not a win for the overall project.
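
The difference between the stage view and the project view can be made concrete with a small comparison for a single bug. All figures are hypothetical.

```python
# Hypothetical per-bug costs in dollars (assumed for illustration).
local_detect_investment = 3_000.0     # creating stage finds the bug itself
fix_cost = 300.0                      # paid by the creating stage either way
extra_release_cost = 1_000.0          # re-release after a downstream report
downstream_debug_cost = 6_000.0       # downstream detect, isolate, and report

# Creating stage's view of the two options.
stage_if_found_locally = local_detect_investment + fix_cost
stage_if_found_downstream = extra_release_cost + fix_cost

# Project view: the downstream stage's effort is also counted.
project_if_found_locally = stage_if_found_locally
project_if_found_downstream = stage_if_found_downstream + downstream_debug_cost

print(f"stage view:   local ${stage_if_found_locally:,.0f} "
      f"vs downstream ${stage_if_found_downstream:,.0f}")
print(f"project view: local ${project_if_found_locally:,.0f} "
      f"vs downstream ${project_if_found_downstream:,.0f}")
```

With these inputs the creating stage saves money by letting the bug escape, while the project as a whole pays more than twice as much.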

Many times there is pressure to make a delivery on time “even if it isn’t perfect.” Perfection is something we don’t normally deal with, but it is worth considering the trade-off between delivery schedule and the increased cost of finding many bugs downstream.

— Track ongoing efficiency metrics

Many of the components of the cost model can be measured during project execution:

- number of bugs detected and created in each stage

- time through the debug loop

- number of deliveries (including requirements and specifications)

- cost per delivery

- cost per acceptance

These are the types of efficiency indicators mentioned in the previous project metrics discussion. This makes sense in that something that is more efficient costs less.

Most of these measures will vary over the course of a project. Further, these measures do not have absolute interpretations; they must be compared with previous projects or with a target.

Even with these qualifications, these cost components are excellent candidates for Key Performance Indicators (KPIs) to track the overall state of the project.

Implementation

– Partial models are OK

As mentioned at the beginning of this post, the entire design flow doesn’t need to be modeled – breaking a single stage into its cost components can be useful. Also, using ratios (e.g., 10% of the CPU cycles are used for debug) can enable quick early analysis which can be confirmed later with actual data.

– The need for automation

Experience shows that the implementation must be automated: everyone is busy, and a request to collect data manually will be viewed as busywork and put off in favor of more urgent tasks. On the other hand, if the data is made available in an accessible form, it will get used.

– Conversion to dollars

The data to be collected is in the form of engineering time, compute resources, and license usage plus counts of deliveries, bugs found, etc. Providing this model to teams and engineers helps them understand trade-offs and encourages them to look for efficiency improvements.

Engineering time, compute resources, and license usage can all be converted to dollars to enable automation trade-offs and cost-benefit analysis of tool or capital purchases. However, actual dollar amounts may be business-sensitive information. If dollar amounts do need to be used in general discussion within the project, conservative numbers (e.g., entry-level salaries or list prices of tools) which are generally known and less sensitive can be used to illustrate the trade-offs.

For many tools, license costs can be combined with compute costs as part of a dollars per core per time unit. For some big ticket licenses or interactive tools it may make more sense to track them separately (e.g., on a per user basis).
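
A minimal sketch of folding a license fee into a per-core-hour rate, assuming the license is used roughly uniformly over a known number of core-hours per year. The function name and all rates are hypothetical.

```python
def cost_per_core_hour(compute_rate, annual_license_cost, licensed_core_hours_per_year):
    """Combine compute and license cost into one dollars-per-core-hour rate."""
    return compute_rate + annual_license_cost / licensed_core_hours_per_year


# Hypothetical inputs (assumed for illustration).
rate = cost_per_core_hour(
    compute_rate=0.05,                    # dollars per core-hour of hardware
    annual_license_cost=20_000.0,         # dollars per simulator license per year
    licensed_core_hours_per_year=8_000.0, # core-hours one license actually runs
)

regression_core_hours = 5_000
regression_cost = rate * regression_core_hours
print(f"${rate:.2f} per core-hour, regression costs about ${regression_cost:,.0f}")
```

The same rate can then be applied to batch-system usage logs to price a regression, a debug session, or an acceptance run.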

– Data mining from different sources

For most of the analysis, we have to get data from a number of different sources such as:

- bug counts from issue tracking system(s)

- design change information from version control system(s)

- compute usage statistics from the batching system

- license usage statistics, especially for interactive tools

- staffing estimates or headcount tracking

- project phase, number of deliverables, etc. from project management

If cost components are going to be monitored during project execution or there will likely be multiple revisions of project statistics, it would make the most sense to pull the data into a data warehouse as described in the project metrics post. From there it can be pulled into a spreadsheet or other analysis tool to evaluate trade-offs.

On the other hand, a completed project’s final data could be collected one time manually and placed directly into a spreadsheet. This would be sufficient to enable trade-off analysis but would generally not have the historical trends to use for reference in the new project.

– Counting things

Most of the costs we need to compute require looking at different sources and computing averages or making estimates based on engineering judgement. Here are two examples:

— Counting releases

Releases often only show up explicitly in Gantt charts and status reports, but are generally associated with version control tagging, tell-tale naming conventions in regressions, etc. Depending on the release and accept flows being used, other tool use may need to be tracked as well. Engineering time is seldom tracked to the level of detail of a release, but can be reasonably estimated and corroborated with time stamps from associated compute activity.

Note that if estimates are being used, the first release and accept costs are typically more than subsequent ones. The higher initial costs can be tracked separately or accounted for in the baseline costs.

— Counting bugs

We need to distinguish bugs created and fixed in the same stage from bugs passed from one stage to another. This can be done with attributes in the bug record (“stage detected”, “stage introduced”, and possibly “stage fixed”) or through commit comments or other issue tracking system mechanisms.
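
Assuming bug records can be exported with such attributes, the split could be computed as in the sketch below. The stage names, their ordering, the field names, and the records themselves are hypothetical.

```python
from collections import Counter

# Hypothetical stage ordering, from upstream to downstream.
STAGE_ORDER = ["spec", "rtl", "verification", "integration"]

# Hypothetical bug records as they might be exported from an issue tracker.
bugs = [
    {"id": 1, "stage_introduced": "rtl", "stage_detected": "rtl"},
    {"id": 2, "stage_introduced": "rtl", "stage_detected": "verification"},
    {"id": 3, "stage_introduced": "spec", "stage_detected": "integration"},
    {"id": 4, "stage_introduced": "verification", "stage_detected": "verification"},
]


def stages_escaped(bug):
    """Number of stages between where a bug was introduced and where it was found."""
    return (STAGE_ORDER.index(bug["stage_detected"])
            - STAGE_ORDER.index(bug["stage_introduced"]))


escapes = Counter(stages_escaped(bug) for bug in bugs)
contained = escapes[0]
passed_downstream = sum(n for distance, n in escapes.items() if distance > 0)

print(f"contained in stage: {contained}, passed downstream: {passed_downstream}")
```

The per-distance counts in `escapes` also show how many bugs escaped by more than one stage.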

The cost for detecting a bug may include stimulus, coverage, simulations, triage, property checking, etc. Because it is common to be working on more than one bug at a time, it is reasonable to compute a cost average: amount of work on a particular release or over a period of time divided by the number of bugs.
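
The averaging can be sketched as follows, assuming engineering hours and core-hours for the period have already been collected. The function name, rates, and totals are hypothetical.

```python
def average_cost_per_bug(engineer_hours, hourly_rate,
                         core_hours, core_hour_rate, bugs_found):
    """Average detection cost per bug over a release or time period."""
    if bugs_found == 0:
        return None  # no bugs found, so an average is not meaningful
    total = engineer_hours * hourly_rate + core_hours * core_hour_rate
    return total / bugs_found


# Hypothetical totals for one release (assumed for illustration).
avg = average_cost_per_bug(
    engineer_hours=320, hourly_rate=50.0,
    core_hours=10_000, core_hour_rate=0.5,
    bugs_found=12,
)
print(f"average cost per bug: ${avg:,.0f}")
```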

Summary

This cost model is a different way of looking at design flows, providing a rational basis for methodology and tool decisions. It turns out that the data needed for the cost model is mostly the same data needed for efficiency metrics.

While a model can be built for the entire design flow, modeling components of a single stage (e.g., hand-off costs or the debug loop) can provide value immediately.

While some decisions are obvious even using conservative estimates, others require more precise project data which generally exists but may not be tracked.

© Ken Albin and System Semantics, 2014. All Rights Reserved.